The Importance of Reading Journal Articles Critically: A Research Paper Review

Context

Recently, an article published in the Orthopaedic Journal of Sports Medicine used a novel study design to look at training with lighter baseballs and its relation to velocity and injury risk. I take issue with a few of the arguments made and with how the information was presented.

I figured this would be an excellent opportunity to write a research article review of the paper. While writing, the review turned into a broader discussion of baseball research and the importance of reading articles critically. Here is the citation of the article I will discuss for those interested in following along.

Citation:

Erickson, B. J., Atlee, T. R., Chalmers, P. N., Bassora, R., Inzerillo, C., Beharrie, A., & Romeo, A. A. (2020). Training With Lighter Baseballs Increases Velocity Without Increasing the Injury Risk. Orthopaedic Journal of Sports Medicine. https://doi.org/10.1177/2325967120910503

Before we begin, I think it’s important to cover some general thoughts on peer-review and research article reviews for those that might be unfamiliar. Such an intro might also avoid the potential of some of my points being misconstrued.

Research Article Reviews

Peer reviews and critiques are a vital part of the scientific method and research in general. If you don’t have a background in this practice, it may come across as rude or combative to disagree with what someone has written.

*Looks over at the hyper-defensive baseball development Twitter world*

It’s easy to be defensive when someone provides a counterpoint to your work. But in the world of science and research, it is not only encouraged and embraced but is a fundamental aspect of the process. There was a recent thread on Twitter from Carl T. Bergstrom, a professor of Biology at UW, that I believe put it best. Updating your models or research based on counterpoints from colleagues isn’t a sign of weakness—it’s literally “doing science.”

https://twitter.com/CT_Bergstrom/status/1243258502023229440?s=20

It is a separate thread topically, but I think it’s a great primer to my review of this article and represents a general perspective shift that the entire baseball community would benefit from.

Research reviews are written based on the writer’s interpretation of the paper and their understanding of the science surrounding it. The aim is to approach the original research objectively, but at the end of the day, we all have our biases and beliefs based on what we think we know within the field and the context of the argument. My disagreements/agreements can only come from my perspective, which is most likely different than the authors’. This highlights the importance of reading things critically and developing your own opinions about the information provided.

Last point on research reviews: The goal is to help the reader of this review understand the paper without having to read it themselves. It’s easy to pick up a paper and just run with the title and what’s written in the abstract. There is always a lot more to it than that, however. We’ll get into the specific methodology of the study in question and some concerns I have with it as we go, but, in general, as a reader of scientific literature, you should not merely take the abstract and title at face value.

I also want to preface the following review by saying that, although I have some critiques, I very much appreciate the work that went into this piece.

Research like this is not easy; it takes an unbelievable amount of time and work that most people don’t appreciate. The methodology was a novel way to go about it, and the documentation of that methodology and design was incredible. The authors made it easy as a researcher to understand and examine the way they went about things, which is also essential for future studies and the ability to replicate the original later on—another critical aspect of science!

With that, let’s get into it.

The Title

The title of this paper is a great place to start because it’s most likely the first thing that you read and the easiest place to get misled:

“Training With Lighter Baseballs Increases Velocity Without Increasing the Injury Risk”

When I first read it, I immediately thought, “Wow, that’s a big claim,” and in my opinion, it’s a pretty misleading title for the general public. In general, I don’t have a problem with including some aspect of the results in the title, but I prefer that it describe the purpose of the study and what they researched within the paper. Usually, this drives people to read it themselves and formulate their own opinion on the topic. In the age of rapid information consumption, a title like this could easily mislead the public. A title that doesn’t do that would be something along the lines of:

“The Effects of Lighter Baseballs on Velocity and Injury Risk”

Not nearly as exciting, but far less misleading. Science and research should aim to help inform the community and provide knowledge on various subjects. When you simplify the title of an article to a claim like this, it seems far too easy to mislead the general public on the topic, especially if they don’t have a background in science and research. It’s exacerbated by how the baseball community generally consumes information through mediums like Twitter. If you’re scrolling through your timeline, as I’m sure many of you were when you came across this article, it’s easy to not read everything involved in the study and walk away thinking something along the lines of “welp, I guess lighter baseballs increase velocity and don’t increase injury at all.”

What the title really says is: “In the context of this study, and given the research parameters that were employed, training with lighter baseballs, among other things, seems to have increased velocity without increasing injury risk.” That is very different than the blanket statement:

Lighter baseballs = more velocity and no injuries.

The Paper

Alright, so let’s get into the study—the study design, the purpose/hypothesis, methods, etc.

Purpose/Hypothesis

“The purpose of this study was to determine whether a training program utilizing lighter baseballs could increase fastball velocity without increasing the injury risk to participants. We hypothesized that a training program with lighter baseballs would increase fastball velocity but not increase the injury risk.”

This is great and right in line with what you’d expect from baseball research right now: aiming to test and identify training modalities and programs that improve velocity while also evaluating their risk (potential for causing injury). I think this paper established its purpose well.

As a general commentary on the baseball research world and academia, it appears that there is this idea that when looking at training modalities and their relation to performance, velocity and injury risk are separate. When we talk about mechanics and training methods, we typically stay focused on velocity because it’s immediately tangible and measurable. Those in academia will sometimes not address velocity and focus solely on injury risk, stress, etc. I believe that to be a significant pitfall of other studies.

In my mind, velocity and injury risk both fall under performance when it comes to pitching. If you don’t throw hard, you’re going to have a harder time performing well, and if you’re injured, you’re not performing at all. So, any research or study that only looks at one or treats them independently fails the athlete. If we exclusively focused on research that reduced the risk of injury, we’d eventually conclude that the safest way to train and throw is to not throw at all. That is why I appreciate the purpose statement of this paper because it states that the researchers are looking at velocity and injury risk simultaneously.

Methods

How did they test that hypothesis? From the abstract:

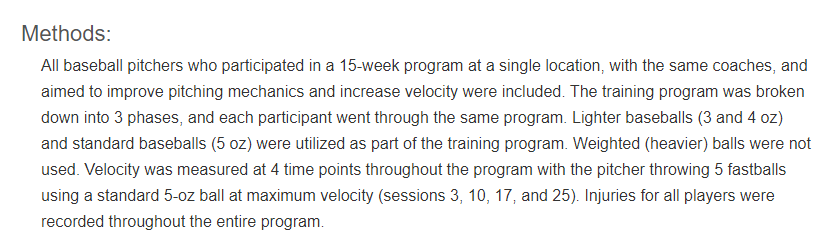

One of the best parts of this study was how well they documented and outlined the phases and twenty-five training sessions that the pitchers went through during this program. The appendices detail the exercises and workouts that the players performed throughout the study.

It’s important to note that in the Methods section, the authors explain that “weighted (heavier) balls were not used.” This is true for the baseball throwing portion of the workouts; no one used anything heavier than a 5 oz baseball. However, in the first and second phases of the fifteen-week program, there was a “velocity program medicine ball routine” that consisted of “light medicine ball routines” and “heavy medicine ball routines”.

Here is a description of the light medicine ball routine: “This routine uses 2- and 4-lb medicine balls to execute ‘over-the-head’ throws with both arms.” The rest of the appendix goes on to detail the various light medicine ball drills that were used, consisting of a variety of constrained two-handed throws. Now, technically the authors’ original statement that heavier balls were not used is still true (no one used anything heavier than a 5 oz baseball during the throwing workout), but heavier balls in the form of 2 and 4 lb medicine balls were still thrown, albeit with two hands.

This brings up my biggest contention with the methodology in this paper: there was no control group. On top of that, there were a variety of training methodologies that the pitchers went through during this fifteen-week program:

- active warm-up + stretching routine (lunges, high knees, butt kicks, squat jumps, and other dynamic stretches)

- heavy and lightweight medicine ball routines

- pre-catch velocity program throwing routines

- long toss routines

- shuffle throws

- walking with step behinds

- jogging with step behinds

- pitching routines and general catch play

- pulldowns (this is where the various lighter-weight balls came into play, with participants throwing 5 oz, 4 oz, and 3 oz balls)

- flat ground and “feel work”

- bullpens

Each of these exercises is an extraneous variable: an additional variable that was not accounted for and that could affect the results of the experiment. In research, you are typically investigating the possible effect of an independent variable (in this case, lightweight baseball training) on the dependent variables (velocity and injury risk). To test this scientifically, you typically perform a controlled study where you compare two groups, and the primary difference between the groups is the independent variable. This research article did not run a controlled study with a control group. The authors address this in their limitations:

“This study did not have a control group of pitchers who used weighted balls, as we do not believe that these programs should be used based on current evidence showing significant increases in injury rates for players.”

We could write another full blog unpacking that statement alone—and we already have! The current evidence for significant injury risk that they cited is Reinold et al. 2018. OC discussed that study in this blog with a look at Long Term Weighted Ball Research in 2018.

Regardless of that, this study had no control group of pitchers using weighted balls. Going even one step further, I would like to have seen a control group that just did not use the lighter weighted baseballs. If the contention is that you did not believe weighted baseball training was safe for the subjects in the study, a simple solution would have been to split the group in half and have one group follow the lighter baseball training program and the other group perform the training program minus the lighter baseballs.

That is how you could have scientifically tested whether lighter baseballs were the reason the pitchers gained velocity and did not get injured. Because the authors did not do that, I believe it’s scientifically unsupported and unfair to make the claim in the results and the conclusion that attributes the velocity gains and the absence of injuries to the lighter baseballs. The changes in velocity and the lack of injuries could be attributed to any of the extraneous variables above.
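To make the control-group point concrete, here is a minimal sketch built entirely on made-up numbers; nothing in it comes from the paper's data. It assumes every pitcher gains some velocity from the shared parts of the program (warm-up, medicine balls, long toss, growth, getting back in shape) and that lighter baseballs add a hypothetical extra amount on top. The function name, group sizes, and effect sizes are all assumptions chosen for illustration.

```python
import random
from statistics import mean

def simulate_gains(n, shared_gain, lighter_ball_gain, noise_sd, rng):
    """Simulated per-pitcher velocity change (mph) over a program.

    shared_gain: hypothetical gain from everything except lighter balls.
    lighter_ball_gain: hypothetical extra gain from lighter baseballs.
    """
    return [shared_gain + lighter_ball_gain + rng.gauss(0, noise_sd)
            for _ in range(n)]

rng = random.Random(0)  # fixed seed so the sketch is repeatable

# Hypothetical scenario: lighter balls contribute NOTHING, and the
# whole gain comes from the shared work both groups do.
lighter_group = simulate_gains(200, 4.8, 0.0, 2.0, rng)
control_group = simulate_gains(200, 4.8, 0.0, 2.0, rng)

# What a study with no control group observes: a large pre/post gain.
single_arm_estimate = mean(lighter_group)

# What a controlled study observes: the gain relative to the control group.
controlled_estimate = mean(lighter_group) - mean(control_group)

print(f"single-arm pre/post gain: {single_arm_estimate:.1f} mph")
print(f"gain relative to control: {controlled_estimate:.1f} mph")
```

In this invented scenario, the single-arm number looks like a strong result even though the lighter baseballs do nothing, while the comparison against a control group lands near zero. That gap is the reason the missing control group matters so much here.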

The Results and Discussion

I’m getting ahead of myself a bit here, so let’s tackle the results and discussion specifically, where I have some other contentions with the paper.

First, four players were excluded from analysis: one due to no baseline velocity data, another who did not complete the program due to a broken ankle suffered while playing basketball at home, and a third because he moved before the completion of the program. These are three very reasonable criteria for not including their data in the analysis. However, the fourth player that wasn’t included in the study “experienced biceps tendon soreness after participating in back-to-back showcases before the training program was completed against recommendations.”

This is where I have a problem. To me, this does not meet the criteria for exclusion, and it comes very close to suppressing evidence and cherry-picking data. That is a form of confirmation bias, where one ignores a critical data point because it does not confirm the hypothesis. At the very least, I’m glad that the authors mentioned why they excluded that data point.

I’d be willing to let it slide if there were additional commentary about how every other subject did not participate in “back-to-back showcases.” If others also participated in back-to-back showcases, their data also needs to be excluded from the analysis. I think it’s safe to assume that some (maybe most) of the other subjects participated in showcases in some capacity because the authors would have likely mentioned it if they hadn’t.

A statement that no one else attended showcases would have meant that showcase attendance was unique to the injured player, and it would have seriously helped the argument for exclusion. Let’s say for the sake of argument that five other players had participated in back-to-back showcases during this study; in that situation, all five of them should have been removed from the analysis. You can’t just cherry-pick and remove the one player that got hurt if others participated in showcases as well.
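One way to see how much a single exclusion can matter is a quick sensitivity check. The sketch below is only an illustration: the sample size of 40 is a placeholder, not the paper's number, the helper names are hypothetical, and it assumes the player with biceps tendon soreness would be counted as an injury if he were kept in the analysis.

```python
def injury_rate(injured, total):
    """Share of analyzed pitchers with a recorded injury."""
    if total <= 0:
        raise ValueError("total must be positive")
    return injured / total

def exclusion_sensitivity(n_analyzed, injured_analyzed, n_excluded_injured):
    """Return (rate as reported, rate if excluded injured players are kept)."""
    as_reported = injury_rate(injured_analyzed, n_analyzed)
    with_excluded = injury_rate(
        injured_analyzed + n_excluded_injured,
        n_analyzed + n_excluded_injured,
    )
    return as_reported, with_excluded

# n_analyzed=40 is a placeholder, NOT the paper's sample size.
as_reported, with_excluded = exclusion_sensitivity(40, 0, 1)
print(f"as reported: {as_reported:.1%}")
print(f"keeping the excluded player: {with_excluded:.1%}")
```

The specific percentages depend entirely on the placeholder sample size. The point is that "zero injuries" and "one injury" are different headline results, and which one you report depends on a single exclusion decision.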

Going even further, I still don’t agree that this is a valid exclusion criterion. The authors are arguing that the showcases had a causal effect on this athlete’s injury. Although coaches may assume that effect exists, to my knowledge, there is no existing research claiming that back-to-back showcase participation is related to injury. Because of that, it does not fit as an exclusion criterion for this study. Throwing injuries are more often chronic as opposed to acute. Beyond that, we don’t know a lot about throwing injuries and their causes. It’s nearly impossible to pinpoint an injury to one thing or event.

The authors return to this in their discussion, which I have some contentions with as well. After excluding those four players from analysis, the authors write that “No player sustained a baseball-related injury during the training program.” Later, they take that result and turn it into the claim that this lighter baseball training program did not increase injury risk. Injury prediction and prevention is one of the hardest things to research. I could discuss my thoughts on that further, but I’ll let this thread speak for me, as I think it’s one of the best commentaries on the variety of issues with injury prediction:

Injury prediction is a waste of time.

This area of inquiry has become dangerously pervasive and it damages sport science's reputation. Much misinformation & misinterpretation exists.

Below I've started a list of the many problems with the area – please feel free to add to it.

— Sam Robertson (@Robertson_SJ) August 2, 2019
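As a rough illustration of why "no injuries observed" is weaker than "no added injury risk," here is a small sketch of the exact one-sided 95% upper bound for a zero-event result, alongside the common "rule of three" approximation. It assumes each player has the same, independent chance of injury during the program, which is a simplification. The sample sizes are placeholders rather than the paper's numbers, and the function name is hypothetical.

```python
def zero_event_upper_bound(n, alpha=0.05):
    """Largest true injury probability p still consistent (at level alpha)
    with seeing 0 injuries in n players: solves (1 - p) ** n == alpha."""
    if n <= 0:
        raise ValueError("n must be positive")
    return 1 - alpha ** (1 / n)

# Placeholder sample sizes, not taken from the paper.
for n in (20, 40, 80):
    exact = zero_event_upper_bound(n)
    approx = 3 / n  # "rule of three" approximation
    print(f"n={n}: exact upper bound {exact:.1%}, rule of three {approx:.1%}")
```

Under these assumptions, a small group with zero recorded injuries still leaves room for a meaningful underlying injury rate, and the bound only tightens as the number of players grows.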

As mentioned earlier, we know that most throwing-related injuries are chronic and most likely due to build-up over time and a variety of factors. Because of this, I would like to have seen an attempt to track injuries longitudinally with these players. This is one aspect of the Reinold et al. paper that was done well; they tracked injuries over the subsequent baseball season. This is a great methodology for baseball training-related research studies that are trying to look at injuries. What happens if a significant percentage of these players experience injuries later in their seasons?

You may argue that later injuries could be the result of other factors, such as in-season workload after the training program was completed, and I agree! But this paper cites a study that tracked injuries after the training program ended (where they found that two of the four injuries that occurred in connection with a weighted baseball program happened later on in the season and not during the training program) as the main piece of evidence suggesting that weighted baseball training leads to “significant increases in injury rates for players.” If the main piece of evidence for not using weighted baseballs in this study relied on longitudinal injury tracking, I would have expected the authors to use that same methodology to investigate their hypothesis, but they did not.

The other primary result of the study was that velocity increased over time. To be more specific, the mean change in velocity by the end of the program was 4.8 mph, and velocity increased for all but one player. That’s tight! As mentioned later in the discussion, “Fastball velocity is an important metric used by many to evaluate and grade baseball pitchers.” However, the discussion also goes on to argue that:

“Our hypotheses were confirmed, as the use of lighter baseballs (3 and 4 oz) was effective in increasing fastball velocity but did not cause any injuries in the pitchers who participated in the program.”

Based on the methodology of this research, I don’t believe that this claim holds up. I’ve already alluded to most of my contentions with this claim throughout this review. Mainly, I believe this is an unsupported statement because of the lack of a control group in this study.

Let’s take a look at the logic of the study.

- Lighter baseballs were used in this training program.

- Velocity increased.

- No one was injured during this study.

I can see how easy it is to look at those three bullet points and say, “Lighter baseballs increase velocity and do not cause injuries.” But if you’re going to make that claim, all of the other things done during training have just as much of an argument for increasing velocity and reducing injury risk—the active warm-up, the light medicine ball work, the heavy medicine ball work, the long toss, the pulldowns, etc.

Additionally, the average age of the pitchers was 14.7 years old (ranging from 11 to 17). It seems more than likely that nearly four months of growth and aging could have benefitted velocity. This is another example of where a control group would have helped investigate the researchers’ hypothesis.

In the methodology section, the authors point out that the training program took place during the winter months. There’s no commentary on the training history of the pitchers going into this. There were two training sessions before the baseline velocity recording. More than likely, players took time off after their summer/fall season and then started to get back into winter training around the beginning of the study.

It’s possible that their initial baseline velocity was lower than their typical throwing velocity because of this, and the training program may have been effective only as a result of getting the pitcher back in shape.

Conclusion

The authors concluded that:

“A 15-week pitching training program with lighter baseballs significantly improved pitching velocity without causing any injuries, specifically to the shoulder or elbow. Lighter baseballs should be considered as an alternative to weighted baseballs when attempting to increase a pitcher’s velocity.”

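Taken literally, this conclusion is true: velocity went up over a program that included lighter baseballs, and no injuries were recorded among the players included in the analysis.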

But, as I’ve summarized with all of my contentions laid out in this review, it’s more complicated than that. I appreciate the work that went into this research, but as with most research and papers, it has limitations, some of which the authors addressed. After reading their article, I would not feel confident in saying that, scientifically, “Training with lighter baseballs increases velocity without increasing the injury risk.”

Ultimately, this is just another lesson in the importance of reading research articles critically.

Without reading the rest of the paper, it would have been easy to take in the title along with a quick scan of the abstract and think that training with lighter baseballs increases velocity without risk of injury. As I hope I’ve made clear, the interpretation of the results of the study is more complex and nuanced than that.

By Anthony Brady

For more commentary on this paper specifically, check out Episode 9 of the Driveline R&D Podcast, where we break down papers and things like this regularly!
